Not long ago, a curious moment unfolded in the world of artificial intelligence. News spread quickly that downloads of the Claude application were rising rapidly, even surpassing other well-known AI assistants in certain app stores. Social media buzzed with commentary. Some users were enthusiastic, praising Claude’s writing ability or thoughtful responses. Others were drawn for a different reason. They were reacting to a controversy involving the relationship between AI companies and government power.

Reports circulated about tensions between Anthropic and the United States government regarding the use of AI in military contexts. At the same time, discussions emerged about how different AI systems might be used in geopolitical conflicts. Some commentators framed the situation dramatically, suggesting that the choice of AI platform might carry political meaning. Whether these interpretations were exaggerated or not, the atmosphere felt charged.

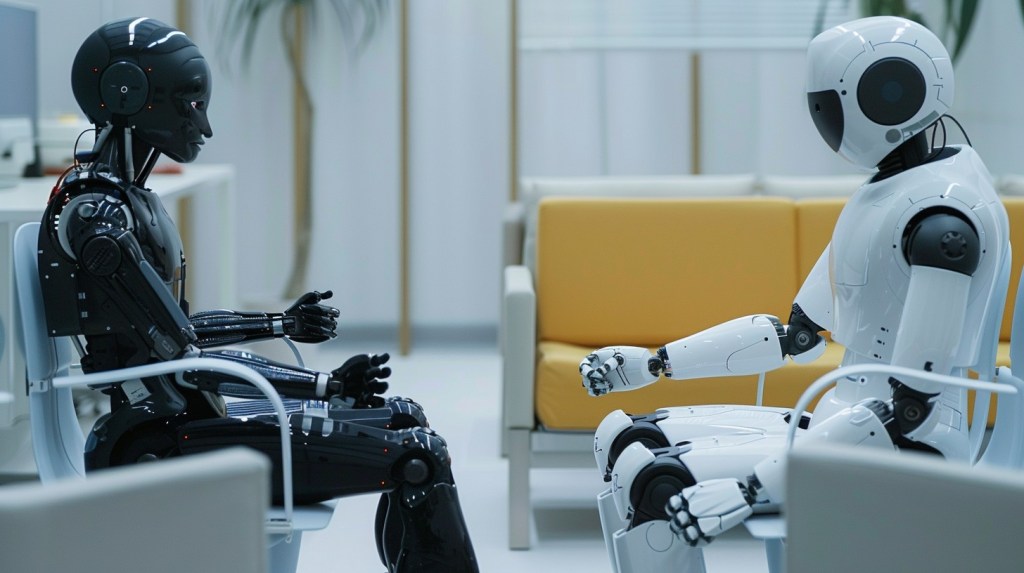

Suddenly, choosing an AI assistant seemed to carry symbolic weight. People compared Claude with ChatGPT as if they represented different philosophies. One platform appeared cautious and safety-focused. Another appeared bold and fast-moving. Users began to speak about AI systems almost as if they were institutions with their own moral identities.

The moment revealed something interesting. The competition between AI systems was no longer only about technical benchmarks. It was beginning to resemble a conversation about values, power, and the future direction of technology. The rise of one application or another felt less like a simple market shift and more like the emergence of different technological cultures.

This sudden surge of attention may eventually fade, but the questions it raised will likely remain. Artificial intelligence is no longer a single technological project. It is gradually becoming a landscape shaped by different philosophies and different visions of the future.

The Emergence of AI Civilizations

As artificial intelligence develops, distinct approaches are beginning to appear. Each major AI ecosystem seems to reflect the priorities and culture of the organization that created it.

Anthropic has cultivated a reputation for caution. Its researchers often emphasize alignment, safety, and careful deployment. Their work is influenced by a concern that powerful AI systems could create serious risks if developed without restraint. This approach appeals to those who believe technological progress must be accompanied by ethical safeguards.

OpenAI represents a somewhat different energy. Its work has often emphasized rapid capability growth and real-world experimentation. Instead of waiting for perfect theoretical solutions, it pushes new models into public use and refines them through experience. This strategy reflects a belief that society learns by interacting with new technologies rather than postponing them indefinitely.

Then there are the institutional giants of the digital world. Companies such as Microsoft and Google are integrating AI into vast ecosystems that already shape everyday life. Their systems appear inside familiar environments such as email, spreadsheets, operating systems, and search engines. Artificial intelligence becomes an additional layer within the infrastructure people already rely on.

Meanwhile, Chinese AI development follows another trajectory. There, artificial intelligence is often viewed through the lens of national strategy and technological independence. The emphasis is on scale, efficiency, and rapid deployment across industries. The technology becomes part of a larger project of economic and technological competition.

Seen together, these approaches resemble the early formation of different technological cultures. Artificial intelligence is not unfolding along a single path. It is branching into multiple directions, each shaped by institutional priorities and cultural assumptions.

The Institutional Frame of AI

Despite these philosophical differences, the way most organizations adopt AI remains surprisingly similar. Inside corporations and government agencies, artificial intelligence often appears through structured programs designed to encourage innovation.

Many companies now organize internal AI competitions. Employees are invited to submit ideas for how artificial intelligence might improve their daily work. The categories are usually familiar. AI can augment human productivity. It can automate repetitive tasks. It can orchestrate complex workflows.

These initiatives generate enthusiasm and creativity. Engineers, analysts, and marketers experiment with new tools. Some of the resulting ideas genuinely improve efficiency. Documents are summarized more quickly. Data analysis becomes faster. Customer service processes are streamlined.

Yet these competitions share an implicit assumption. Artificial intelligence is expected to improve the existing system rather than question it. Employees are asked how AI can make their current workflows better, not whether those workflows should be redesigned entirely.

The effect is subtle but powerful. The imagination of the organization remains bounded by its current structures. AI becomes a helpful assistant within familiar processes rather than a force that might transform them.

Institutions tend to approach new technologies this way because stability matters. Companies must protect existing investments and maintain predictable operations. Radical experimentation carries risks that large organizations are not always prepared to accept.

Still, the result is that much of the early corporate enthusiasm around AI revolves around optimization rather than transformation. The technology becomes a tool for improving the machine rather than reconsidering how the machine works.

The Gravity of Existing Ecosystems

Large technology platforms face a similar tension. Their greatest strength is also their greatest constraint.

Microsoft and Google both possess enormous digital ecosystems. Hundreds of millions of people rely on Microsoft Office, Windows, Gmail, and Google Workspace every day. Integrating artificial intelligence into these environments allows AI to reach a massive audience almost instantly.

Microsoft’s Copilot, for example, appears inside Word, Excel, and PowerPoint. Google’s Gemini integrates with email, documents, and search. These tools are powerful and practical. They help users write reports, analyze data, and organize information more efficiently.

From a business perspective, this approach is perfectly logical. AI strengthens the value of the existing platform while introducing new capabilities. The familiar environment remains intact, which makes adoption easier for organizations and individual users alike.

Yet this integration also carries a hidden limitation. When AI is embedded within existing software applications, it inherits the assumptions of those applications.

A word processor assumes that writing happens inside a document. A spreadsheet assumes that thinking can be structured into rows and columns. A presentation program assumes that ideas should eventually become slides.

Human thought, however, does not naturally follow those structures. Ideas often emerge through wandering conversations, half-formed questions, and unexpected connections. The formats of traditional software reflect practical needs, but they do not fully capture the fluid nature of thinking itself.

When artificial intelligence is confined within these structures, its role becomes limited. It improves productivity inside established frameworks but rarely escapes them.

This is the gravity of existing ecosystems. Their scale is immense, but their conceptual boundaries remain tied to earlier forms of digital work.

Conversation and the Return of Dialogue

Something different began to appear when conversational AI systems entered public life. Interfaces built around dialogue changed the way people interacted with machines.

Instead of navigating menus or filling out forms, users simply began asking questions. They explored ideas through conversation. A request might begin with a vague curiosity and evolve into a longer exchange of thoughts.

This shift may seem simple, but it carries deeper implications. Dialogue has long been one of the most powerful tools for human thinking.

Ancient Greek philosophers often taught through conversation. The Socratic method relied on questions and responses rather than lectures. In religious traditions, debate and commentary helped communities refine their understanding of sacred texts. In Zen Buddhism, brief exchanges between teacher and student sometimes revealed insights that formal explanations could not.

Conversation allows ideas to develop gradually. One thought leads to another. Assumptions are challenged. Unexpected perspectives appear.

Conversational AI systems revive this ancient intellectual rhythm. They create digital spaces where thinking can unfold through dialogue rather than through rigid formats.

Users begin a discussion with a simple question. A response arrives. Another question follows. The exchange evolves into something more complex than the original request.

In this environment, artificial intelligence becomes more than a productivity feature. It becomes part of the process of thinking.

Beyond the Institutional Imagination

This possibility points toward a different vision of artificial intelligence, one that extends beyond the boundaries of institutional thinking.

In many corporate discussions, AI is described primarily as a tool. It speeds up tasks, reduces costs, and improves efficiency. These benefits are real and valuable. They help organizations function more effectively in a competitive world.

Yet the deeper transformation may lie elsewhere. Artificial intelligence may gradually become a partner in the intellectual life of individuals.

When people spend time conversing with AI systems, they often discover that the interaction feels less like operating software and more like engaging in a reflective dialogue. Ideas are tested, refined, and sometimes challenged. Questions that might have remained unspoken begin to take shape.

This emerging relationship can be described as relational AI. The emphasis shifts from automation toward interaction. Intelligence becomes something that unfolds between the human and the machine rather than something delivered by the machine alone.

Such a perspective does not reject institutional uses of AI. Automation, orchestration, and augmentation will continue to play important roles in organizations. Businesses will keep searching for ways to improve productivity and streamline operations.

But alongside these developments, another transformation may take place. Individuals will use AI not only to complete tasks but also to explore ideas, develop insights, and think through complex questions.

This transformation may not arrive through dramatic announcements or technological revolutions. It may unfold gradually through millions of conversations between people and machines.

The recent excitement surrounding Claude and ChatGPT offers only a glimpse of this deeper shift. Beneath the headlines about downloads and competition lies a more subtle question.

Artificial intelligence may not simply change how we work. It may change how we think together.

Image: StockCake